AI Threat Detection: How Machine Learning Catches What Rules Miss

Why traditional rule-based and signature-dependent security systems fail against modern threats, and how AI-powered behavioral detection catches attacks that rules miss entirely.

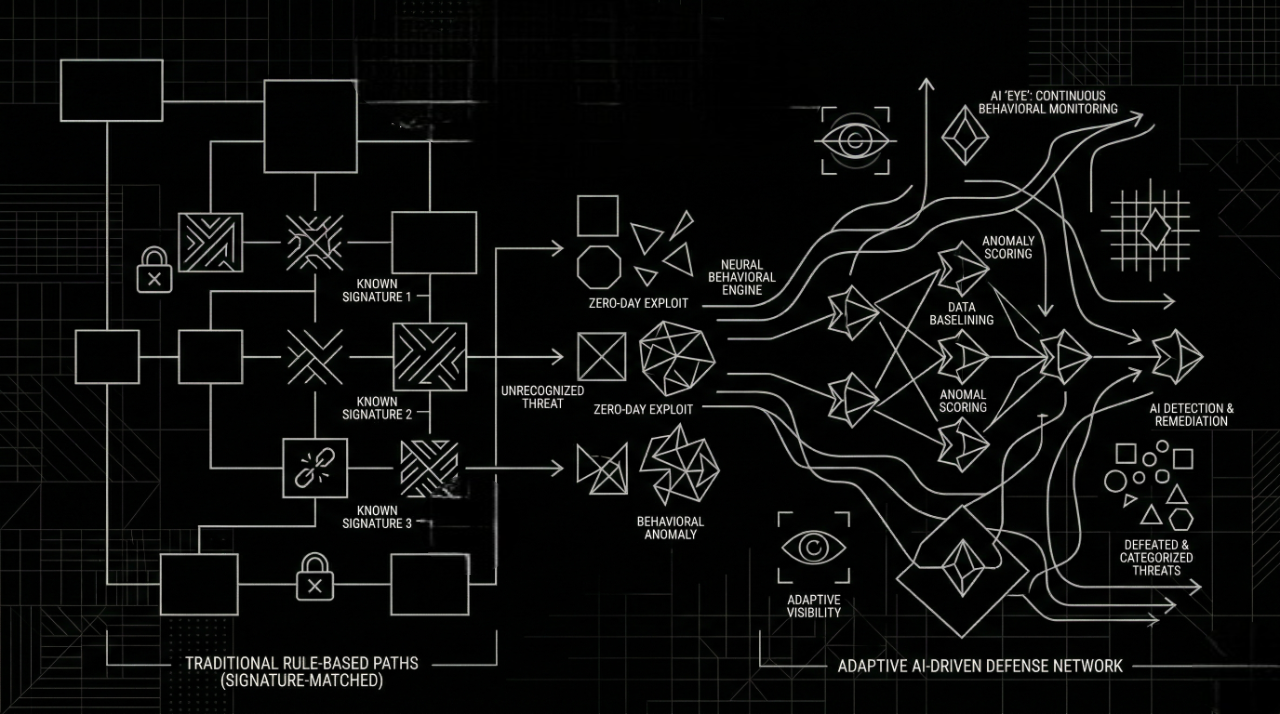

AI threat detection uses machine learning to analyze behavioral patterns and detect anomalies in real time, identifying zero-day exploits, phishing campaigns, insider threats, and data exfiltration attempts that signature-based, rule-based security systems are fundamentally blind to.

The Night No Alert Fired

At 2:47 AM on a Tuesday, a major Indian financial institution's security operations center was quiet. No alerts. No anomalies. No flags.

Attackers had already been inside the network for 214 days.

They moved slowly and deliberately, reading internal emails, mapping database schemas, and siphoning customer records in small batches that stayed below every detection threshold. By the time an analyst noticed something unusual in a routine log review, it was too late. Three million customer records were gone.

The security stack in place? Industry-standard SIEM. Next-generation firewall. Signature-based endpoint protection.

None of it worked. Not because the tools were defective, but because they were designed for a different era of attack.

According to the IBM Cost of a Data Breach Report 2024, the average data breach takes 258 days to identify and contain (194 days to identify and 64 days to contain). The report put the global average cost of a data breach at USD 4.88 million in 2024, roughly 10% higher than the year before. Meanwhile, global cybercrime is projected to cost $10.5 trillion annually by 2025, according to Cybersecurity Ventures.

The threat landscape has fundamentally changed. The tools protecting most organizations have not.

This article breaks down exactly why rule-based security fails and how AI and machine learning are catching what the rules miss.

Why Rule-Based Security Is Losing the War

Static Rules, Dynamic Threats

Traditional security systems operate on a single, simple premise: define what "bad" looks like, then alert when it appears.

Write a rule. Detect the signature. Block the pattern. Repeat.

This model worked when attackers relied on predictable malware families and well-worn attack playbooks. Today, that model is a speed camera that only photographs one specific car model while the rest of the highway races by unchecked.

The fundamental problems with rule-based detection:

- Signature dependency: Signatures are written after threats are discovered. Zero-day exploits, by definition, have never been seen before. No signature exists.

- Alert overload: Large organizations generate billions of security events daily. Static correlation rules produce thousands of low-quality alerts. According to Gartner, over 90% of SIEM alerts are never investigated due to analyst bandwidth limitations.

- Brittle logic: Sophisticated attackers study detection rules. Nation-state threat actors specifically calibrate their activity to stay beneath alert thresholds.

- No behavioral context: A user downloading 10,000 files at 3 AM might be running a legitimate migration or exfiltrating your IP. A rule-based system sees the action. An AI system sees the context.

The SIEM Gap

SIEM platforms are excellent aggregation and correlation tools. But they are only as good as the rules loaded into them, and those rules are always written in the past, about attacks that already happened.

AI doesn't replace the SIEM. It makes the SIEM actually useful by transforming raw alert volume into a prioritized, high-confidence signal that human analysts can act on.

The Invisible Insider

A finance employee with legitimate database access quietly exports customer records in small increments over six weeks. Always during business hours. Always within their access permissions. Never triggering a rule.

The data is gone. Every action was technically "allowed."

Rule-based systems are permission checkers. AI is a context engine. That single distinction is the gap between breach and defence.
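To make the insider scenario concrete, here is a minimal sketch of why a per-event rule stays silent while a cumulative view does not. The thresholds, the 30-day window, and the synthetic export log are all assumptions chosen for illustration; a production system would learn per-user baselines rather than hard-code a budget.

```python
from collections import defaultdict
from datetime import date, timedelta

PER_EVENT_LIMIT = 1000   # hypothetical static rule: alert on any single export above this
WINDOW_DAYS = 30         # hypothetical rolling window length
WINDOW_LIMIT = 5000      # hypothetical cumulative budget per user per window


def rule_based_alerts(events):
    """Static rule: flag only individual exports larger than PER_EVENT_LIMIT."""
    return [e for e in events if e[2] > PER_EVENT_LIMIT]


def cumulative_alerts(events):
    """Flag users whose total exports inside any rolling window exceed WINDOW_LIMIT."""
    by_user = defaultdict(list)
    for user, day, rows in sorted(events, key=lambda e: e[1]):
        by_user[user].append((day, rows))

    flagged = set()
    for user, history in by_user.items():
        start, running = 0, 0
        for day, rows in history:
            running += rows
            # Drop exports that have aged out of the rolling window.
            while (day - history[start][0]).days >= WINDOW_DAYS:
                running -= history[start][1]
                start += 1
            if running > WINDOW_LIMIT:
                flagged.add(user)
                break
    return flagged


# Synthetic log: 400 rows exported every weekday for six weeks.
first_day = date(2024, 1, 1)
events = [
    ("fin_user", first_day + timedelta(days=d), 400)
    for d in range(42)
    if (first_day + timedelta(days=d)).weekday() < 5
]

print(rule_based_alerts(events))   # no single export crosses the per-event rule
print(cumulative_alerts(events))   # the rolling total does
```

Every individual export is well under the per-event limit, so the static rule returns nothing, while the rolling total crosses the window budget within a few weeks.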

How AI and Machine Learning Detect What Rules Miss

Behavioral Analysis: Security That Thinks

Modern AI threat detection doesn't match against known bad patterns; it builds a behavioral baseline for every user, device, application, and network segment. Once it understands what "normal" looks like, any deviation becomes a detectable signal.

Think of it like a great security analyst who has worked at your company for three years. They know who logs in at 6 AM, who never touches the financial database, and which server never makes outbound connections. The moment something changes, they notice, even if no rule exists for it.

AI does this at machine speed, across millions of simultaneous data points.

Behavioral analysis flags:

- Login attempts from impossible geolocations (a user in Mumbai and Singapore within 45 minutes)

- Sudden access to data repositories never touched in the user's history

- Abnormal outbound transfer volumes to external hosts

- Privilege escalation outside normal workflow patterns

- Service accounts behaving like human users
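The first flag in the list above, impossible travel, is simple enough to sketch directly. The example below assumes each login carries a timestamp and coordinates (for instance from IP geolocation) and treats anything faster than roughly airliner speed as implausible; the speed ceiling and the city coordinates are illustrative assumptions, not values from any particular product.

```python
import math
from datetime import datetime

EARTH_RADIUS_KM = 6371.0
MAX_PLAUSIBLE_KMH = 900.0  # assumption: roughly airliner cruising speed


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance between two points given in decimal degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlam = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlam / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def is_impossible_travel(first, second):
    """Each login is a (timestamp, latitude, longitude) tuple."""
    (t1, lat1, lon1), (t2, lat2, lon2) = first, second
    distance = haversine_km(lat1, lon1, lat2, lon2)
    hours = abs((t2 - t1).total_seconds()) / 3600
    if hours == 0:
        return distance > 0
    return distance / hours > MAX_PLAUSIBLE_KMH


# Approximate city-centre coordinates, 45 minutes apart.
mumbai = (datetime(2024, 5, 7, 9, 0), 19.0760, 72.8777)
singapore = (datetime(2024, 5, 7, 9, 45), 1.3521, 103.8198)
print(is_impossible_travel(mumbai, singapore))
```

In practice this check produces false positives for VPN and mobile-carrier egress points, which is one reason it is usually treated as one signal among several rather than as a standalone alert.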

Anomaly Detection: Finding the Signal in the Noise

Anomaly detection uses unsupervised machine learning to identify statistical outliers in telemetry data without any predefined rule.

Two core approaches:

| Approach | How It Works | Best For |

|---|---|---|

| Statistical anomaly detection | Establishes baseline distributions; flags deviations beyond threshold | Volume spikes, rate anomalies, traffic patterns |

| ML-based anomaly detection | Uses algorithms (Isolation Forests, Autoencoders, LSTMs) to model complex multi-dimensional behavior | Behavioral sequences, encrypted traffic, subtle lateral movement |
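The statistical row of the table can be illustrated with a z-score check using only the Python standard library. The hourly transfer volumes below are made-up numbers and the threshold of three standard deviations is a common convention rather than a recommendation; the ML-based row would typically swap this for a trained model such as an Isolation Forest.

```python
import statistics


def zscore_outliers(baseline, observed, threshold=3.0):
    """Return observed values lying more than `threshold` standard deviations from the baseline mean."""
    mean = statistics.fmean(baseline)
    stdev = statistics.stdev(baseline)
    if stdev == 0:
        # A perfectly flat baseline: any different value is a deviation.
        return [x for x in observed if x != mean]
    return [x for x in observed if abs(x - mean) / stdev > threshold]


# Hypothetical outbound transfer volume per hour, in MB.
baseline_mb = [120, 135, 128, 110, 142, 131, 125, 138, 119, 133]
observed_mb = [130, 127, 890, 141]

print(zscore_outliers(baseline_mb, observed_mb))  # [890]
```

A single-metric z-score is easy to reason about but only catches volume-style anomalies; it says nothing about sequences of actions, which is where the multi-dimensional models in the second row come in.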

Pattern Recognition at Scale: Speaking the Attacker's Language

Machine learning models trained on petabytes of threat telemetry recognize attack patterns catalogued by MITRE ATT&CK - even when those patterns are obfuscated, modified, or executed in novel sequences.

A signature matches a specific exploit. An ML model matches the underlying behavior class of an entire attack category.

This means:

- A never-before-seen ransomware variant is detected because it behaves like ransomware (shadow copy deletion, rapid file enumeration, high-entropy writes)

- A living-off-the-land (LotL) attack using legitimate Windows tools is flagged because the sequential use of `certutil`, `mshta`, and `powershell` is anomalous in context

- A multi-stage APT unfolding over weeks is correlated as a single attack chain because AI maps the entire kill chain across time
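One of the ransomware behaviors named above, high-entropy writes, rests on a simple measurement: encrypted output looks statistically random, so its Shannon entropy approaches 8 bits per byte. The sketch below computes that value; the 7.5-bit cutoff is an assumed threshold for illustration, and compressed files (ZIP, JPEG, video) also score high, so a real detector would combine this with the other signals.

```python
import math
import os
from collections import Counter


def shannon_entropy(data: bytes) -> float:
    """Shannon entropy of a byte string, in bits per byte (0.0 to 8.0)."""
    if not data:
        return 0.0
    total = len(data)
    counts = Counter(data)
    return -sum((c / total) * math.log2(c / total) for c in counts.values())


def looks_encrypted(data: bytes, threshold: float = 7.5) -> bool:
    """Assumed heuristic: treat near-random content as a possible encrypted write."""
    return shannon_entropy(data) > threshold


plain_text = b"Quarterly revenue summary for the finance team. " * 100
random_like = os.urandom(4096)  # stands in for encrypted file content

print(round(shannon_entropy(plain_text), 2))
print(looks_encrypted(plain_text), looks_encrypted(random_like))
```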

Real-Time Learning and Continuous Adaptation

Unlike static rules requiring manual updates, AI models learn continuously.

As your environment evolves - cloud footprint expands, new users onboard, business processes change - the detection model adapts its behavioral baselines automatically. New threat intelligence from global sources is continuously integrated, refining what "suspicious" looks like in both your local environment and the broader threat landscape.
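A minimal way to picture a baseline that adapts on its own is an exponentially weighted moving average: each new observation nudges the expected value, so slow, legitimate drift is absorbed while sudden jumps stand out. The class below is a sketch under assumed parameters (`alpha`, `tolerance`, `warmup` are illustrative names and values), not a description of how any specific product retrains its models.

```python
import math


class AdaptiveBaseline:
    """Exponentially weighted mean and variance that track gradual change."""

    def __init__(self, alpha=0.1, tolerance=3.0, warmup=5):
        self.alpha = alpha          # weight given to each new observation
        self.tolerance = tolerance  # allowed deviation, in standard deviations
        self.warmup = warmup        # observations to absorb before flagging anything
        self.mean = None
        self.var = 0.0
        self.seen = 0

    def observe(self, value):
        """Return True if `value` is anomalous; otherwise fold it into the baseline."""
        if self.mean is None:
            self.mean = float(value)
            self.seen = 1
            return False
        deviation = value - self.mean
        std = math.sqrt(self.var)
        anomalous = (
            self.seen >= self.warmup
            and abs(deviation) > self.tolerance * max(std, 1e-9)
        )
        if not anomalous:
            # Standard incremental update for an exponentially weighted mean and variance.
            self.mean += self.alpha * deviation
            self.var = (1 - self.alpha) * (self.var + self.alpha * deviation ** 2)
            self.seen += 1
        return anomalous


baseline = AdaptiveBaseline()
readings = [100, 102, 98, 101, 99, 101, 100, 500]  # hypothetical hourly login counts
results = [baseline.observe(v) for v in readings]
print(results)
```

Excluding flagged values from the update keeps an attacker from dragging the baseline upward with a single burst, at the cost of needing a separate path to accept a genuine, permanent shift in behavior.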

AI vs. Traditional Security: The Full Comparison

| Capability | Rule-Based / SIEM | AI-Powered Detection |

|---|---|---|

| Zero-day detection | Blind — no signature | Behavioral patterns catch exploitation |

| Unknown malware | No match, no alert | Behavioral equivalents detected |

| Insider threat | Actions within permissions = no alert | Behavioral deviation = flagged |

| Alert signal quality | Thousands of low-confidence alerts | Prioritized, high-confidence triage |

| Adaptability | Manual rule updates by analysts | Continuous model retraining |

| False positive rate | 60–90% false positive rate typical | Significantly lower false positive rate |

| Time to detect | Days to months | Minutes to hours |

| Analyst bandwidth needed | High - every alert is manual | Low - AI pre-triages before humans |

| Multi-vector correlation | Limited cross-source stitching | Cross-source, cross-time chain correlation |

| Scalability | Rules degrade at scale | Performance improves with more data |

| Phishing defense | Signature-only email filters | Real-time URL, behavioral, device-layer detection |

| AI attack resilience | Polymorphic AI malware evades trivially | Models adapt to attack evolution |

Real-World Use Cases Where AI Changes the Outcome

Use Case 1: Cross-Platform Phishing Protection

Phishing is no longer just an email problem.

Attackers now deliver phishing links through SMS (smishing), WhatsApp, LinkedIn DMs, Slack messages, collaboration platforms, and malicious QR codes - all channels that traditional email security gateways were never designed to protect.

A finance team member receives a WhatsApp message with a convincing CFO impersonation and a link to a spoofed cloud document. The link bypasses the email gateway completely. The device is not a managed corporate asset. Traditional security sees nothing.

This is why cross-platform, device-agnostic phishing protection is one of the most critical gaps in modern enterprise security.

Metis by Astraq is purpose-built for exactly this challenge — a cross-platform phishing protection system that operates across multiple devices and communication surfaces simultaneously. Metis analyzes:

- URL structure, domain registration age, and SSL anomalies in real time, across any channel

- Domain lookalike and impersonation detection, catching the difference between `astraqcyberdefence.com` and `astraqcyberdefence.co`

- AI-generated phishing content patterns, including LLM-crafted messages designed to mimic trusted internal communications

- Device-level link interception protecting BYOD and unmanaged devices that fall outside traditional MDM coverage
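Lookalike-domain detection, one of the checks listed above, is often approximated with edit distance: a domain that is one or two character edits away from a trusted one, but not identical, is suspicious. The sketch below is a generic illustration of that idea and makes no claim about how Metis implements it; the trusted list and the distance cutoff are assumptions, and it does not handle homoglyph or punycode tricks.

```python
def edit_distance(a: str, b: str) -> int:
    """Levenshtein distance computed with a rolling row of the DP table."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, 1):
        cur = [i]
        for j, cb in enumerate(b, 1):
            cur.append(min(
                prev[j] + 1,               # delete a character from a
                cur[j - 1] + 1,            # insert a character into a
                prev[j - 1] + (ca != cb),  # substitute if the characters differ
            ))
        prev = cur
    return prev[-1]


TRUSTED_DOMAINS = {"astraqcyberdefence.com"}  # hypothetical allow-list


def lookalike_of(domain, trusted=TRUSTED_DOMAINS, max_distance=2):
    """Return the trusted domain this one imitates, or None if it is trusted or unrelated."""
    domain = domain.lower().rstrip(".")
    if domain in trusted:
        return None
    for candidate in sorted(trusted):
        if edit_distance(domain, candidate) <= max_distance:
            return candidate
    return None


print(lookalike_of("astraqcyberdefence.co"))   # astraqcyberdefence.com
print(lookalike_of("astraqcyberdefence.com"))  # None
print(lookalike_of("example.org"))             # None
```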

With phishing representing the entry point for over 90% of cyberattacks according to Verizon's 2024 DBIR, cross-platform protection is not a nice-to-have. It's urgent infrastructure.

Use Case 2: Enterprise Data Privacy — Preventing Breaches Before They Start

AI has introduced a threat vector that barely existed five years ago: internal AI systems themselves becoming data exfiltration channels.

Here's the scenario: An enterprise deploys an AI assistant to help employees draft reports, answer questions about internal documents, and summarize meeting notes. Employees begin feeding the AI sensitive contracts, customer PII, financial projections, and confidential product roadmaps — because it helps them work faster.

The AI system processes and learns from all of it. And if it's hosted outside the enterprise's controlled environment, that data has effectively left the building. Even worse, a poorly configured AI system can leak confidential information, surfacing real customer names, real financial figures, and real internal data in responses to completely unrelated queries.

This is the privacy-first enterprise AI problem: the risk isn't just hackers breaching your perimeter. It's your own tools leaking your own data.

Phoebe by Astraq is built to solve this. Phoebe is a RAG (Retrieval-Augmented Generation) based enterprise intelligence system designed from the ground up with privacy-first architecture:

- Private data stays private: Phoebe processes enterprise knowledge entirely within controlled infrastructure; no data leaves to external AI providers

- Hallucination prevention by design: Unlike general-purpose LLMs, Phoebe's RAG architecture grounds every response in your verified internal knowledge base, dramatically reducing AI hallucination risk

- Prevents data breach via AI channels: Phoebe enforces knowledge access controls at the retrieval layer — employees only get AI-assisted answers from data they're authorized to see

- Audit trails on AI interactions: Every query and response is logged, enabling compliance with DPDP, GDPR, and internal data governance policies
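Enforcing access control at the retrieval layer means filtering the document set by the caller's permissions before any ranking or generation happens, so unauthorized text never reaches the model's context. The sketch below shows that ordering with a toy keyword scorer; the `Document` type, group names, and scoring are hypothetical and are not drawn from Phoebe's actual implementation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    doc_id: str
    text: str
    allowed_groups: frozenset


def retrieve(query, user_groups, corpus, top_k=3):
    """Filter by permission first, then rank the remaining documents by term overlap."""
    terms = set(query.lower().split())
    # Permission filter: documents the user cannot see are dropped before scoring.
    visible = [d for d in corpus if d.allowed_groups & user_groups]
    scored = [(len(terms & set(d.text.lower().split())), d) for d in visible]
    scored = [(score, d) for score, d in scored if score > 0]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [d.doc_id for _, d in scored[:top_k]]


corpus = [
    Document("hr-001", "salary bands for engineering roles", frozenset({"hr"})),
    Document("eng-014", "engineering onboarding guide", frozenset({"engineering", "hr"})),
]

print(retrieve("engineering salary bands", {"engineering"}, corpus))  # ['eng-014']
print(retrieve("engineering salary bands", {"hr"}, corpus))           # ['hr-001', 'eng-014']
```

Filtering before ranking matters: if permissions were applied only to the final answer, restricted text could still influence the generated response.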

For enterprises deploying AI internally, Phoebe answers a question that general-purpose AI assistants cannot: How do you get the productivity benefits of AI without accepting the data privacy risks?

Think of Phoebe as the difference between handing every employee a public internet search and giving them a private, company-specific intelligence system that knows what it's allowed to say and to whom.

Use Case 3: Proactive Security — Building a Team That Can Fight Back

Detection tools are only as effective as the humans operating them. And here lies an underappreciated dimension of the cybersecurity gap: most organizations do not have a security team that has ever practiced defending against a real attack.

Tabletop exercises describe scenarios. Penetration tests simulate attacks on pre-defined scopes. Neither builds the genuine muscle memory that comes from actively defending a live environment under pressure.

Capture The Flag (CTF) competitions are the gold standard for hands-on security training, but running a CTF at scale is operationally complex. Most organizations lack the infrastructure to host challenges, manage teams, track progress, and scale competitions across universities, departments, or partner organizations.

Athena by Astraq is a scalable CTF platform that enables organizations and universities to design, host, and run Capture The Flag competitions at any scale.

Who Athena is built for:

- Enterprises running internal red team/blue team training programs or onboarding security analysts

- Universities and colleges hosting cybersecurity competitions for students and faculty

- Governments and defense organizations running certified security training programs

- Security conferences and communities organizing open CTF events

What Athena enables:

- Scalable challenge infrastructure: Deploy CTF environments for 50 or 5,000 participants without manual setup overhead

- Multi-category challenges: Web exploitation, reverse engineering, cryptography, forensics, OSINT, and more

- Real-time scoreboard and team management across all participants

- Customizable challenge environments: Organizations can build challenges from their own real-world threat scenarios

The connection to AI threat detection is direct: organizations that regularly run CTF training develop security teams with practiced, scenario-tested instincts. When an anomaly surfaces in a production environment, those analysts have been in the room under pressure before. They know what to do.

Building detection capability without building analyst capability is a half-measure. Athena completes the loop.

Use Case 4: Zero-Day Attack Detection

Zero-day vulnerabilities — flaws unknown to the vendor and therefore unpatched — are among the most dangerous threat categories. In 2024, CISA tracked 87+ actively exploited zero-day vulnerabilities, a significant year-over-year increase.

Because no signature exists for a zero-day exploit, rule-based systems have no defense. AI-powered behavioral detection identifies the effects of exploitation — unusual process spawning, unexpected outbound connections, anomalous memory access — even when the specific exploit has never been seen.
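As a rough illustration of detecting the effects of exploitation rather than the exploit itself, the sketch below flags parent-child process pairs that are rare in historical telemetry. The baseline counts, process pairs, and the `min_seen` cutoff are invented for the example; real endpoint tooling would draw on far richer context such as command lines and network activity.

```python
from collections import Counter


def rare_spawns(baseline, observed, min_seen=3):
    """Return observed (parent, child) pairs seen fewer than `min_seen` times in the baseline."""
    history = Counter(baseline)
    return [pair for pair in observed if history[pair] < min_seen]


# Hypothetical historical telemetry: (parent process, child process).
baseline = (
    [("explorer.exe", "chrome.exe")] * 50
    + [("services.exe", "svchost.exe")] * 200
    + [("winword.exe", "splwow64.exe")] * 10
)

observed = [
    ("explorer.exe", "chrome.exe"),
    ("winword.exe", "powershell.exe"),  # a document viewer launching a shell
]

print(rare_spawns(baseline, observed))  # [('winword.exe', 'powershell.exe')]
```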

Use Case 5: Supply Chain and Third-Party Risk

With rising cyberattacks in India targeting software supply chains, and global enterprises increasingly reliant on third-party dependencies, AI threat detection extends to monitoring software integrity, third-party access patterns, and update mechanism integrity.

According to CrowdStrike's Global Threat Report 2024, supply chain intrusions increased 200% year-over-year, with sophisticated actors embedding persistent access in trusted software update pipelines.

Why It Matters Now: The Urgency Is Real

Adversaries Are Already Using AI

This is not a future scenario. It is the current state.

Nation-state APT groups and professional cybercriminal organizations are deploying:

- AI-generated polymorphic malware - self-modifying code that rewrites its own signature on every infection to defeat antivirus

- LLM-powered spear phishing - perfectly personalized, grammatically flawless phishing emails generated at scale targeting specific individuals

- Automated zero-day discovery - AI-driven vulnerability research that finds and weaponizes flaws faster than any patch cycle

- Adversarial AI probing - automated systems that probe detection tools to map their blind spots and calibrate evasion

If attackers are using AI, defending with rules is not a gap — it's a surrender.

The Autonomous SOC Is Becoming Standard

Global enterprises are transitioning from reactive, staff-intensive SOC operations to AI-powered autonomous SOC architectures. Gartner predicts that by 2028, AI will autonomously handle 40% of all security incident triage currently performed by human analysts.

With rising cyberattacks in India, ransomware targeting critical infrastructure, state-sponsored espionage against defense and finance sectors, and phishing campaigns exploiting rapid digital adoption, Indian enterprises face a threat volume their current analyst headcount cannot absorb.

The answer is not hiring faster. It is augmenting human analysts with AI that triages, correlates, and prioritizes on their behalf — so every investigation hour is spent on the threats that matter.

Astraq Cyber Defence is purpose-built for this reality. Astraq's ecosystem of AI-native security products (Metis, Phoebe, and Athena) addresses three critical dimensions of modern enterprise security: detection, data privacy, and team readiness.

Compliance Is Forcing the Issue

Regulatory frameworks are now explicitly requiring AI-augmented, behavioral security monitoring:

- India's DPDP Act (Digital Personal Data Protection Act) creates significant liability for organizations that fail to implement adequate technical controls to protect personal data. AI-level behavioral monitoring is increasingly what "adequate" means.

- RBI Guidelines for Indian financial institutions explicitly require advanced, continuous threat monitoring capabilities across all digital channels.

- NIST Cybersecurity Framework 2.0 (released in 2024) explicitly incorporates continuous monitoring and AI-augmented detection as core governance controls.

- ISO 27001:2022 was updated to include threat intelligence and behavioral monitoring as explicit implementation requirements.

- SEBI Cybersecurity Framework for market infrastructure institutions mandates advanced threat detection and incident response capabilities.

Non-compliance is no longer just a security risk. It is a legal and reputational one.

What Your Organization Should Do Today

Immediate Actions This Quarter

- Map your detection coverage against MITRE ATT&CK: identify which attack technique categories you have zero visibility into. Most organizations are blind to at least 40% of the framework.

- Audit your alert-to-investigation ratio: if your SOC investigates fewer than 20% of fired alerts, you have a signal quality crisis. More rules will not fix it.

- Assess your phishing exposure across all channels: email gateway coverage is not enough. Run a simulated phishing campaign across SMS, WhatsApp, and collaboration tools to understand actual cross-platform exposure.

- Review your AI tool data flows: if your organization uses AI assistants, audit what data employees are feeding them and where that data is processed. The risk of internal AI data exfiltration is growing faster than most security teams are tracking.

- Run a CTF training event: even a half-day internal challenge with Athena dramatically improves your analysts' hands-on detection and response instincts.
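The first two actions in the list above reduce to simple arithmetic once the inputs are collected. The sketch below computes a coverage gap over a handful of ATT&CK technique IDs and an alert-to-investigation ratio; the in-scope technique set, the detected set, and the alert counts are placeholder values, and a real assessment would cover the full matrix relevant to your environment.

```python
def coverage_gap(in_scope, detected):
    """Return the techniques with no detection and the fraction of scope they represent."""
    scope = set(in_scope)
    missing = sorted(scope - set(detected))
    return missing, len(missing) / len(scope)


def investigation_ratio(alerts_fired, alerts_investigated):
    """Share of fired alerts that an analyst actually looked at."""
    return alerts_investigated / alerts_fired if alerts_fired else 0.0


# A small illustrative slice of ATT&CK technique IDs.
in_scope = {
    "T1566",  # Phishing
    "T1059",  # Command and Scripting Interpreter
    "T1003",  # OS Credential Dumping
    "T1021",  # Remote Services
    "T1041",  # Exfiltration Over C2 Channel
}
detected = {"T1566", "T1059", "T1003"}  # hypothetical current coverage

missing, gap = coverage_gap(in_scope, detected)
print(missing, f"{gap:.0%}")  # ['T1021', 'T1041'] 40%

ratio = investigation_ratio(alerts_fired=12000, alerts_investigated=1800)
print(f"{ratio:.0%}", "signal quality problem" if ratio < 0.20 else "ok")
```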

The Strategic Transition Roadmap: Rule-Based to AI-Driven

Moving from rule-based to AI-powered detection is not a rip-and-replace. It is a layered augmentation:

Phase 1 - Augment: Layer AI behavioral analytics on your existing SIEM. Improve alert quality without disrupting existing workflows. Begin with your highest-value data repositories.

Phase 2 - Prioritize: Train your SOC team to treat AI risk scores as the primary triage signal. Measure alert-to-investigation ratio monthly; it should improve steadily.

Phase 3 - Extend Surface Coverage: Deploy cross-platform phishing protection (Metis) across BYOD and unmanaged device fleets. Implement Phoebe for controlled, privacy-compliant internal AI usage. Run Athena CTF cycles to build analyst readiness.

Phase 4 - Automate and Optimize: Shift low-risk, high-confidence detections to automated playbooks. Reserve human analysts for complex, multi-stage investigations. Continuously retrain models on your environment's evolving behavioral profile.

Conclusion: Adapt or Fall Behind

The 2026 cyber threat landscape is defined by one dynamic: attackers adapt faster than defenders.

AI-powered attackers are already in the field, writing polymorphic malware, crafting LLM-generated phishing campaigns, and probing your detection tools for blind spots. Rule-based systems were not designed to fight this.

AI threat detection shifts the balance. It gives your security team the behavioral intelligence to find threats that have no signature, the data privacy controls to prevent AI from becoming an exfiltration channel, cross-platform phishing protection that follows the attacker wherever they deliver the payload, and the training infrastructure to build the human capability to act on every signal.

For Indian enterprises and global organizations navigating an AI-speed threat environment, the question is no longer whether to modernize security. It is how fast.

The longer you wait, the longer attackers have to be inside without you knowing.